How are autonomous systems able to observe the world around them? For starters, they rely on computer vision to perceive the surrounding environment. Computer vision works by analyzing and interpreting data from sensing and perception systems that detect physical stimuli such as light, sound, heat, or radio frequency (RF) signals.

For autonomous systems, collecting data with sensors and interpreting it with perception systems is the first functional step. Take, for example, an autonomous helicopter that transports supplies over a desert. To perform this function, the helicopter first needs to observe the terrain around it so that it can make decisions about its flight path.

That’s where sensing and perception technology comes in. Autonomous systems can use cameras, radar, lidar, thermal cameras, ultrasonic sensors, global positioning systems (GPS), inertial measurement units (IMUs), and more to gain information on external stimuli. They then use this information to make decisions.

The applications of these autonomous technologies in the aerospace and defense (A&D) industry are far-reaching. Uses range from a drone taking high-resolution pictures of the land far below it while in flight to autonomous electric vertical takeoff and landing (eVTOL) vehicles using advanced real-time radar systems to detect other aircraft.

It’s important to mention that while sensing and perception systems are key hardware components in autonomous designs, there are other essential physical components. For example, engineers also need to consider connectivity and vehicle-to-everything (V2X) communication in their designs. Many autonomous designs depend on hardware that can communicate consistently and effectively with infrastructure, networks, other vehicles, and devices. Further, all these hardware components also need to be fully integrated with software components, such as control systems.

When designing this essential hardware, engineers throughout the A&D industry must focus on developing accurate and reliable integrated designs. As this technology becomes increasingly complex, designing these hardware systems will become even more of a hurdle that teams must push past to innovate in this space.

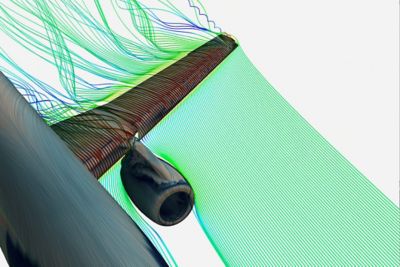

Radar systems use electromagnetic radio waves to detect objects and ranges in varied conditions

Four Sensing and Perception Technologies To Keep Your Eye On

Although the technology driving autonomy in A&D is varied and growing, four types of sensing and perception systems are commonly found across the industry. Autonomous systems process the information gathered by these technologies and convert it into a format that they can interpret and act upon based on pre-programmed actions.

1. Cameras

Autonomous systems use cameras to capture visual images of the world around them. As such, engineers focus on developing high-resolution cameras that can capture high-quality visual information for accurate perception and analysis, no matter the speed of the autonomous vehicle, the distance between the camera and subject, the weather conditions, or other external phenomena such as sunlight or glare.

Two of the main challenges engineers face when designing cameras are achieving high-quality images in varying lighting conditions and balancing resolution, frame rate, motion handling, and power consumption.

2. Lidar Systems

Light detection and ranging (lidar) is a remote sensing technology that uses light pulses to map an environment. In addition to autonomous systems, lidar is often used for topography analysis, mapping, and robotics. Autonomous vehicles use lidar systems to create detailed 3D maps and detect objects accurately for enhanced perception.

To fulfill their function, lidar systems need to achieve high resolution, function over large ranges, and maintain performance even in adverse weather conditions.
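As a rough illustration of how light pulses become a 3D map, the sketch below converts pulse returns into points. It assumes a simple time-of-flight model in which range is half the round-trip distance travelled at the speed of light, and it assumes each return comes with the beam's azimuth and elevation angles. The function names and the input layout are hypothetical and chosen only for this example; they are not taken from any particular lidar product or API.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum, meters per second


def range_from_time_of_flight(round_trip_s: float) -> float:
    """Return the distance to the target in meters.

    The pulse travels to the target and back, so the one-way
    distance is half of the total path length.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert a range and two beam angles into an (x, y, z) point.

    Assumed convention (hypothetical): azimuth is measured in the
    horizontal plane from the x axis, elevation is measured up from
    that plane, and the sensor sits at the origin.
    """
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


def returns_to_points(returns):
    """Map (round_trip_s, azimuth_rad, elevation_rad) tuples to 3D points."""
    return [
        to_cartesian(range_from_time_of_flight(t), az, el)
        for t, az, el in returns
    ]


if __name__ == "__main__":
    # Two made-up returns: one straight ahead, one 90 degrees to the side.
    sample_returns = [(1.0e-6, 0.0, 0.0), (2.0e-6, math.pi / 2, 0.0)]
    for point in returns_to_points(sample_returns):
        print(point)
```

A real lidar pipeline would also need to handle sensor calibration, vehicle motion during the scan, and noisy or missing returns, all of which this sketch leaves out.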

3. Radar Systems

Radio detection and ranging (radar) systems use electromagnetic radio waves for robust object detection and ranging. A few common applications of radar are air traffic control and weather forecasting. Radar systems are capable of reliable object detection and distance measurement in many different conditions.

Regarding challenges, radar systems need to ensure accuracy even in cluttered environments and must manage interference from other radar systems.

4. Thermal Cameras

Thermal cameras are used to detect temperature variations and thermal patterns and can enable autonomous systems to more easily perceive their surroundings in low-visibility settings, such as dark environments.

Engineers designing thermal cameras need to ensure that they can achieve high sensitivity and resolution while integrating thermal data with other sensor data.

Looking Ahead: Optimizing Sensing and Perception Technology in A&D

Engineers working on sensing and perception systems face a few main challenges, no matter their specific technology. These include optimizing size, weight, power, and cost (SWaP-C) throughout the design and development process, ensuring that designs function optimally across the many types of platforms and applications in the A&D industry segments, and meeting critical safety and performance standards.

To overcome these hurdles, engineering simulation software has emerged as a necessary solution that enables you to:

- Use AI to optimize imaging systems and create more robust products using a comprehensive multiphysics approach that accounts for heat, vibration, deformation, and more

- Virtually validate and optimize antenna and array designs with ray tracing and RF analysis

- Integrate and validate systems in various conditions and realistic environments across air, land, sea, and space

- Increase efficiency and reduce time to market via scalability, more efficient testing, a reduced need for physical prototypes, and automation

Thanks to digital engineering, multidisciplinary teams of experts will be able to develop sensing and perception designs that are not only functional, but are also efficient, accurate, robust, and ready for deployment as quickly as possible.

A few examples of the leaps we may see in this space include:

- Cameras with enhanced visual perception, better multiwaveband imaging systems, and improved object recognition and tracking

- Radar with reliable detection in all weather conditions, enhanced distance measurement, object tracking, minimal weight, and ghost target elimination

- Lidar with precise 3D mapping and object detection, optimal placement, and enhanced navigation with obstacle avoidance

- Thermal cameras with improved perception in low visibility conditions and enhanced detection of thermal patterns and anomalies

To learn more about the future of autonomy in A&D, visit the Autonomous Systems for A&D page for e-books, customer success stories, and technical solutions.
